SaaS MVP Teardown: Lessons from a Live Build

Building revenue-generating apps is faster than ever with AI tools, but speed doesn’t guarantee success. This teardown analyzes real examples of MVPs built in under a month, highlighting what worked, what didn’t, and lessons learned. Key takeaways include:

- Speed vs. Planning: Quick builds (e.g., 9–22 days) saved time but often lacked proper user outreach or marketing plans.

- Common Failures: Poor timing, unclear onboarding, and database mistakes led to zero signups or operational issues.

- AI’s Role: AI tools like Cursor and Bolt AI accelerated development, by as much as 10x according to one builder, but required clear planning and oversight.

- Focus on Core Features: Cutting non-essential features helped builders solve specific problems efficiently.

- User Feedback Over Revenue: Early-stage feedback proved more critical than immediate income.


Quick Stats:

- Build Times: 9–22 days

- Budgets: $75–$500

- Outcomes: $0–$1,200 in monthly revenue

The verdict? Speed matters, but success depends on solving real problems, planning ahead, and engaging users effectively.


Project Overview: The SaaS MVP

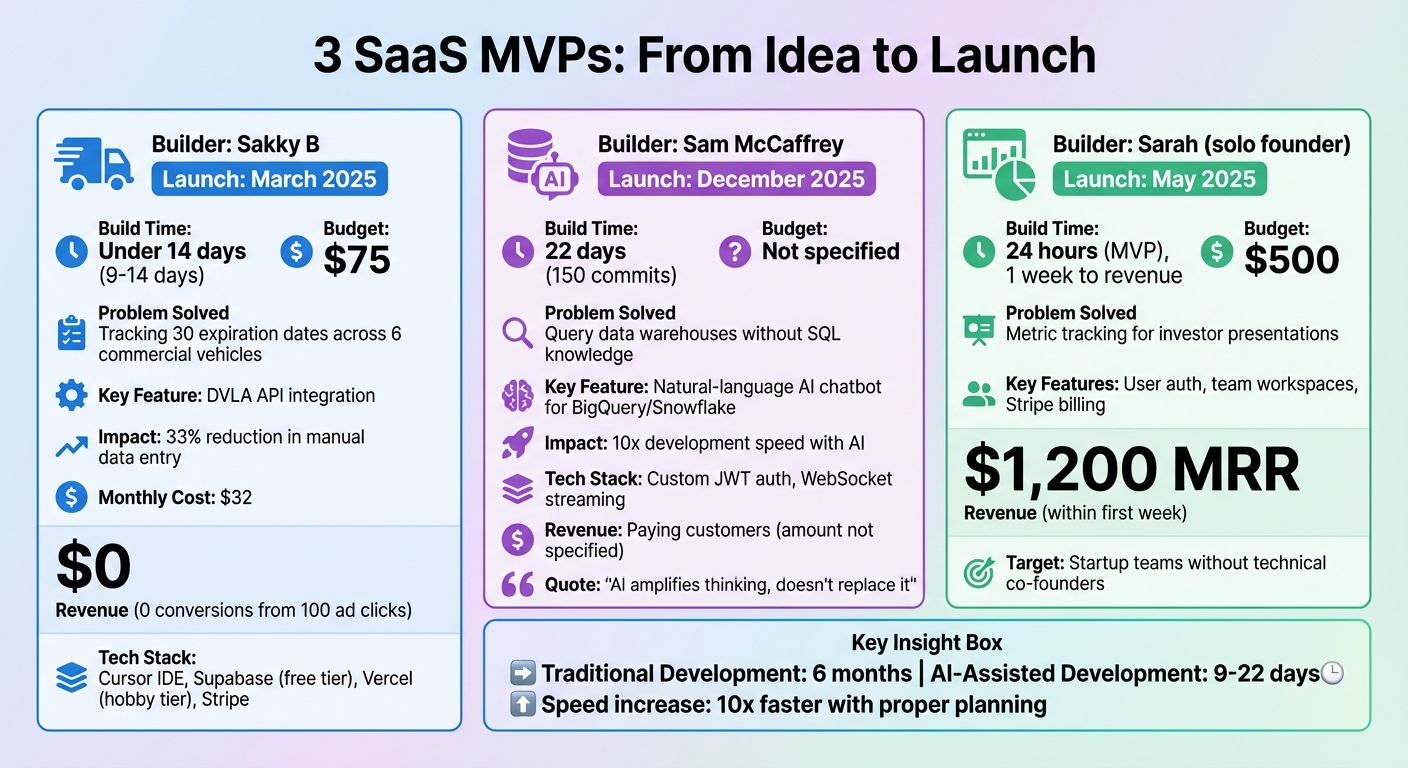

SaaS MVP Build Comparison: 3 Projects, Timelines, Costs, and Revenue

Problem and Target Users

These projects tackled three distinct challenges. First, the Vehicle Expiry Tracker, designed by Sakky B in March 2025, addressed a compliance nightmare for Kent Gurkha, a UK-based cleaning company managing 30 different expiration dates (MOT, Road Tax, Insurance, PMI) across six commercial vehicles. Previously reliant on spreadsheets, they struggled with manual tracking. Sakky’s tool automated alerts using the DVLA API, reducing manual data entry by 33% [3].
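The core of an expiry tracker like this is a date comparison. Below is a minimal sketch of that check, not Sakky's actual code: the `ExpiryRecord` type, the `due_for_alert` function, the 30-day alert window, and the sample registration plates are all assumptions made for illustration, and the sketch does not call the DVLA API.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ExpiryRecord:
    vehicle: str      # registration plate (made-up values below)
    kind: str         # e.g. "MOT", "Road Tax", "Insurance", "PMI"
    expires_on: date


def due_for_alert(records, today, window_days=30):
    """Return records already expired or expiring within window_days, soonest first."""
    cutoff = today + timedelta(days=window_days)
    due = [r for r in records if r.expires_on <= cutoff]
    return sorted(due, key=lambda r: r.expires_on)


# Hypothetical sample data; a real tool would load this from its database.
records = [
    ExpiryRecord("AB12 CDE", "MOT", date(2025, 4, 10)),
    ExpiryRecord("AB12 CDE", "Insurance", date(2025, 9, 1)),
    ExpiryRecord("XY34 ZZZ", "Road Tax", date(2025, 3, 20)),
]
alerts = due_for_alert(records, today=date(2025, 3, 25))
for record in alerts:
    print(record.vehicle, record.kind, record.expires_on.isoformat())
```

Passing `today` in explicitly, rather than reading the clock inside the function, keeps the check easy to test against fixed dates.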

Next up is Synapse, launched by developer Sam McCaffrey in December 2025. This tool solved a common issue for teams working with complex data warehouses like BigQuery and Snowflake: the need for SQL expertise. McCaffrey’s solution was a natural-language AI chatbot that enabled users to query company data without writing a single line of code [4].

Finally, there’s VentureTrack, created by solo founder Sarah in May 2025. She focused on startup teams who needed to track metrics for investor presentations but lacked a technical co-founder or a sizable budget. Within 24 hours, Sarah developed a working product that included user authentication, team workspaces, and Stripe billing. By the end of the first week, she had already hit $1,200 in monthly recurring revenue [8].

Each of these projects had unique challenges, shaping their development process and outcomes.

Build Goals and Constraints

Speed and adaptability were at the heart of these projects, with each one built under tight constraints. For example, McCaffrey developed Synapse in just 22 days with 150 commits - an effort that might take a traditional team six months. His strategy? He focused on efficiency, even customizing JWT authentication to avoid delays with third-party platforms [4].

Sarah faced an even tighter timeline. With only 48 hours to prepare for an investor demo and a $500 budget, she delivered a functioning product [8].

Sakky B’s Vehicle Expiry Tracker was built in under two weeks for just $75. The bigger challenge here wasn’t just time - it was proving that a non-engineer could create a functional B2B SaaS product using AI tools like Cursor [3]. As McCaffrey aptly explained:

"AI amplifies thinking, doesn't replace it. Architecture decisions were still mine. AI helped me execute at 10x speed." [4]

These projects weren’t about creating polished, award-winning products. Instead, they were experiments driven by constraints, designed to test ideas quickly and effectively.

Build Process: From Idea to Deployment

Ideation and Feature Selection

When deciding on features, the creators focused on what users would actually pay for, rather than aiming for technical perfection. Take Sam McCaffrey's Synapse, for example. It was designed as a multi-tenant AI data warehouse query platform, prioritizing core querying features over unnecessary extras. On the other hand, Sakky B's Vehicle Expiry Tracker homed in on critical integrations, such as the DVLA API. This integration alone cut manual data entry by 33%, while less essential tools like reporting features were put on hold [3]. The goal was clear: build the smallest sellable product. This approach kept development fast and focused.

Tech Stack and Tools

AI-assisted development played a big role in speeding up timelines across all three projects. Sakky B relied on Cursor as his main IDE, using Supabase's free tier for database and authentication, and Vercel's hobby tier for hosting [3]. Meanwhile, McCaffrey's Synapse included custom-built JWT authentication and WebSocket-based streaming, all completed in just 150 commits over 22 days. Reflecting on the process, McCaffrey noted:

"The future isn't AI replacing developers. It's developers with AI shipping what used to require teams." [4]

For payments, Stripe was the go-to option for most, though Senko Rasic's MarkShot project chose LemonSqueezy to simplify tax compliance [2]. Tools with built-in deployment pipelines were a common choice, as they helped skip lengthy setup times. These decisions directly influenced how quickly each project could move from development to a functional MVP.

Deployment and Launch

Deployment brought its own set of challenges, often revealing gaps between AI-generated solutions and real-world production needs. Sakky B faced recurring issues during deployment; fixing one bug often caused unexpected problems elsewhere. For example, his Vercel deployment initially blocked necessary traffic due to overly strict middleware, requiring manual adjustments [3]. McCaffrey's Synapse ran into delays caused by persistent CORS errors:

"Some days were 15+ commits of pure shipping. Others were 3 commits of 'why is CORS still broken.'" [4]

Senko Rasic's MarkShot had its own hurdles, including a major delay when the PayPal sandbox crashed during webhook testing. Despite the core API functioning properly, this issue became the project's biggest time sink [2]. These experiences underline the need for thorough manual testing, especially with third-party integrations and AI-generated fixes.

Despite these challenges, the results were impressive. Sakky B's Vehicle Expiry Tracker went live in under two weeks, costing just $75 [3]. Meanwhile, McCaffrey's Synapse transformed from an empty repository to a product with paying customers in only 22 days [4].

Key Trade-offs and Decisions

Scope vs. Speed: What Was Cut

When speed takes priority, some features inevitably get left on the cutting room floor. For McCaffrey's Synapse, backend usage tracking was skipped entirely. Instead, a simpler frontend-only subscription guardrail was implemented, which counted rendered data. This decision saved a lot of development time while still delivering the core functionality users needed. Similarly, Sakky B's Vehicle Expiry Tracker delayed adding extras like reporting dashboards and analytics. Instead, the focus was on integrating the DVLA API - a move that highlighted the importance of cutting non-essential features to solve the primary user problem effectively [3].
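The source does not show how the Synapse guardrail was written, so the following is only a sketch of the general idea of counting rendered data against a plan allowance. The `RenderGuardrail` class and the row limit are hypothetical, and the logic is written in Python for consistency with the other examples, even though a frontend guardrail would run in the browser.

```python
from dataclasses import dataclass


@dataclass
class RenderGuardrail:
    row_limit: int          # hypothetical per-plan allowance
    rows_rendered: int = 0  # running count of rows already shown

    def allow(self, rows: int) -> int:
        """Return how many of `rows` may be shown, and record them as rendered."""
        remaining = max(self.row_limit - self.rows_rendered, 0)
        shown = min(max(rows, 0), remaining)
        self.rows_rendered += shown
        return shown

    @property
    def exhausted(self) -> bool:
        return self.rows_rendered >= self.row_limit


guard = RenderGuardrail(row_limit=100)
first = guard.allow(60)    # fits within the allowance
second = guard.allow(60)   # only part of this result set fits
```

The trade-off is worth stating plainly: a counter that lives only in the client is quick to build but can be bypassed by a determined user, which is why it suits an MVP better than a mature billing system.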

Platform and Tool Selection

Choosing between building custom solutions and using third-party services often boils down to control versus convenience. McCaffrey opted to hand-code JWT authentication for Synapse rather than relying on a service like Auth0. He explained his reasoning:

"Hand-rolling isn't always slower. Owning the code meant moving exactly as fast as I could type. No support tickets. No 'not in your plan.'" [4]

Tools like Cursor helped speed up this custom development process, offering a way to quickly implement features that might have otherwise been time-consuming for a solo developer. For those looking to build and launch full-stack products without deep coding knowledge, AI-native environments are lowering the barrier to entry. Still, there were situations where third-party services made more sense. For example, using Stripe for payments provided automatic PCI DSS compliance - a crucial advantage, especially considering that 62% of SaaS startups underestimate compliance needs, which can lead to costly delays [11].

Here’s a quick comparison of the trade-offs between custom builds and third-party solutions:

| Aspect | Custom Build | Third-Party / Pre-Built |
|---|---|---|
| Development Speed | 2–6 months typical [10] | Days to weeks [3] [4] |
| Upfront Cost | $25,000–$75,000 [10] | $75 total (using free tiers) [3] |
| Control | Complete ownership [4] | Limited by vendor terms [11] |
| Compliance | Manual (high risk) [11] | Built-in (e.g., PCI DSS via Stripe) [11] |
| Monthly Maintenance | High (in-house team) | Low (managed by provider) |

Sometimes, a mix of both approaches worked best. Sakky B demonstrated this with a hybrid setup, combining managed services like Supabase (for databases and authentication), Vercel (for hosting), and Stripe (for payments) with a custom DVLA API integration. This strategy kept monthly costs as low as $32 while still allowing room to create unique features [3]. These carefully made choices laid the groundwork for the post-launch outcomes that follow in the next section.

Post-Launch Results: What Worked

Metrics and User Feedback

The results varied across the different MVPs, but some tools made a notable impact. SaaStr.ai managed to draw in between 15,000 and 20,000 users each month, while the Startup Valuation Calculator processed an impressive 250,000 valuations within just a few weeks of its debut [6]. These numbers highlighted how tools that address specific problems can quickly gain traction.

Revenue insights, though modest, were revealing. For instance, ClarifyPDF earned $45.42 during its first week with 1,000 visitors and eventually reached $320.83 in revenue after 11,600 visitors over three months [1]. Developer Farez learned a key lesson about pricing: cutting the price of a PDF from $1.99 to $0.99 doubled the number of customers. While the revenue remained the same, the increase in users provided valuable feedback [1].

"Early on, feedback trumped revenue. Halving my price doubled customer numbers while revenue remained unchanged."

- Farez, Developer of ClarifyPDF [1]
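The arithmetic behind these figures is easy to check. The sketch below uses the prices and revenue totals reported above; the customer counts (100 before the price cut, 200 after) are hypothetical, chosen only to show why doubling customers at half the price leaves revenue roughly flat.

```python
def revenue_cents(price_cents: int, customers: int) -> int:
    """Revenue in cents, using integers to avoid floating-point rounding."""
    return price_cents * customers


def revenue_per_thousand_visitors(revenue: float, visitors: int) -> float:
    return round(revenue / visitors * 1000, 2)


# Hypothetical customer counts; only the two prices come from the article.
before = revenue_cents(199, 100)   # $1.99 x 100 customers
after = revenue_cents(99, 200)     # $0.99 x 200 customers

# Reported totals: first week, then cumulative over three months.
week_one = revenue_per_thousand_visitors(45.42, 1_000)
three_months = revenue_per_thousand_visitors(320.83, 11_600)

print(before / 100, after / 100)   # revenue in dollars at each price
print(week_one, three_months)      # dollars earned per 1,000 visitors
```

Under these assumptions the price cut changes revenue by about half a percent while doubling the number of people who can give feedback. The per-visitor figures also show that revenue per 1,000 visitors was lower over the full three months than in the first week.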

Not every tool hit the mark, though. For example, Vehicle Expiry Tracker failed to convert any customers despite getting 100 clicks from Google Ads [3]. Similarly, ClearNoteLab launched on Hacker News but didn’t generate a single signup, illustrating how factors like timing and social proof can significantly influence user engagement [5].

These insights - both successes and setbacks - helped shape the improvements that followed.

What Worked Well

The performance metrics were just one part of the story. Real-world practices also played a crucial role in driving success. One standout factor was the speed of iteration. Developers using AI tools like Cursor were able to build production-ready apps in just 15–22 days, compared to the months such projects typically take [4][2]. For example, Sam McCaffrey launched Synapse, a multi-tenant SaaS platform featuring custom authentication and Stripe billing, in only 22 days with 150 commits [4]. This rapid development allowed teams to gather user feedback while competitors were still ironing out plans.

Tools like Crisp.io live chat added another layer of insight by providing real-time user feedback that traffic metrics alone couldn’t capture [1][13]. These direct interactions helped developers quickly identify and address friction points. A great example of this approach is the Clarity AI learning platform, which achieved a 100% success rate across 533 tests before its early access launch [13].

Focusing on core functionality over unnecessary features also proved effective. By prioritizing essential capabilities, developers were able to deliver solutions that addressed user needs without overcomplicating things. McCaffrey, for instance, skipped complex backend usage tracking and instead implemented simpler frontend guardrails, saving time without compromising the user experience [4]. Another example is the Vehicle Expiry Tracker, which integrated the DVLA API to provide a straightforward, functional tool that solved a specific problem [3][4].

This combination of speed, simplicity, and user-focused development laid the groundwork for these projects’ successes.

Failures and Lessons Learned

Main Failures and Root Causes

Not every project hits the mark. Take ClearNoteLab, for example - a meeting note PDF generator developed in just 9 days with a budget of $200. Launched on Hacker News on December 22nd, it gained only a single upvote and zero signups. Jack, the founder, quickly realized that timing and distribution were just as critical as the product itself:

"Building is easy. Distribution is hard. I can ship a working SaaS in 9 days for $200, but I can't make anyone care about it" [5].

This underscores a common pitfall: focusing on rapid development without a solid plan for reaching users.

Learnflow AI, another project, faced a different challenge. Founder Shola Jegede launched a voice-first AI tutoring app that initially attracted users, only to see them drop off quickly. The issue? Miscommunication during onboarding. Users didn’t understand that voice calls required credits, and moments of silence while the AI processed responses were mistaken for app failures. By adding a clear "Start Session (1 Credit)" button and animations to indicate the AI was actively "listening", support inquiries dropped by 70% [14].

Then there’s OmniTrackr, a file monitoring tool built using Claude. Founder Manjula Liyanage encountered a major setback due to poor database design. He had consolidated connection info, schedules, and tracking data into a single table. When it came time to reuse S3 connections for other features, the database structure became a bottleneck, forcing days of refactoring into five normalized tables and migrating data. As Liyanage explained:

"The quality of your AI-built MVP depends entirely on how well you prompt it. Make the wrong assumptions early, and you'll spend days (and thousands of tokens) refactoring" [15].

Practical Takeaways for Builders

These setbacks reveal key lessons for avoiding similar mistakes. The overarching theme? Speed without proper planning often leads to problems. Here’s how to sidestep some of the most common issues:

- Document your architecture early. Setting up a `/docs` folder with design documents before you start coding can save you from future confusion. It helps you avoid the "mystery box" scenario where you forget the reasoning behind key decisions [15].

- Normalize your database from the start. Avoid building monolithic tables that might seem quicker upfront but will create headaches when scaling or adding new features later.
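To illustrate the normalization advice, here is a small sketch using Python's built-in `sqlite3` module. It splits one flat table into separate tables for connections, schedules, and tracking, so that a connection can be reused. The table and column names are hypothetical, and the sketch uses three tables rather than the five OmniTrackr ended up with, since the source does not describe that schema.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- The "everything in one table" shape: connection details repeat on every row.
CREATE TABLE monitors_flat (
    id INTEGER PRIMARY KEY,
    bucket TEXT,
    access_key_id TEXT,
    cron TEXT,
    last_seen_file TEXT
);
INSERT INTO monitors_flat (bucket, access_key_id, cron, last_seen_file) VALUES
    ('reports', 'KEY-A', '0 * * * *', 'jan.csv'),
    ('reports', 'KEY-A', '0 6 * * *', 'feb.csv');

-- Normalized shape: each concern gets its own table.
CREATE TABLE connections (
    id INTEGER PRIMARY KEY,
    bucket TEXT NOT NULL,
    access_key_id TEXT NOT NULL,
    UNIQUE (bucket, access_key_id)
);
CREATE TABLE schedules (
    id INTEGER PRIMARY KEY,
    connection_id INTEGER NOT NULL REFERENCES connections(id),
    cron TEXT NOT NULL
);
CREATE TABLE tracking (
    schedule_id INTEGER NOT NULL REFERENCES schedules(id),
    last_seen_file TEXT
);

-- Migrate: one row per distinct connection, then link schedules to it.
INSERT INTO connections (bucket, access_key_id)
    SELECT DISTINCT bucket, access_key_id FROM monitors_flat;
INSERT INTO schedules (id, connection_id, cron)
    SELECT f.id, c.id, f.cron
    FROM monitors_flat f
    JOIN connections c
      ON c.bucket = f.bucket AND c.access_key_id = f.access_key_id;
INSERT INTO tracking (schedule_id, last_seen_file)
    SELECT id, last_seen_file FROM monitors_flat;
""")

connection_count = conn.execute("SELECT COUNT(*) FROM connections").fetchone()[0]
schedule_count = conn.execute("SELECT COUNT(*) FROM schedules").fetchone()[0]
```

After the migration, two schedules share a single connection row, so any new feature that needs the same connection can reference it instead of copying credentials into yet another table.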

When it comes to onboarding, clarity is king.

- Integrate guidance directly into your interface. Replace vague labels like "Start" with more specific ones like "Start Session (1 Credit)" to manage expectations.

- Use visual feedback. Animations or live transcripts reassure users that the system is working, not frozen [14].

- Prompt users to upgrade at the right time. Well-timed nudges can make all the difference in converting free users into paying customers.

Lastly, timing your launch is just as important as the product itself. Avoid launching during holidays when attention is scarce, build an audience in advance, and include even basic testimonials to establish trust [5]. These lessons offer a roadmap for navigating the challenges of rapid product development.

Conclusion: Lessons from This SaaS MVP Build

Speed is only valuable when paired with learning. Back in April 2025, developer Senko Rasic built MarkShot - a SaaS API designed to convert webpages into markdown - in just 25 hours using Claude Code. That’s about one-tenth the time a traditional build would take. Rasic’s success highlights a crucial point: rigorous pre-planning allows AI tools to amplify development speed. His approach? He created a detailed technical specification before letting AI write even a single line of code [2].

This "plan first, implement second" philosophy ensures clarity throughout the development process. While AI tools can speed up implementation by a factor of ten, they can’t replace a solid foundation of planning and understanding. As Jeff Morhous wisely puts it:

"AI tools, no matter how clever, should never take the place of a firm understanding of what you're building" [7].

This clarity not only accelerates development but also sets the stage for incorporating valuable real-world insights.

Early feedback is more important than early revenue. For instance, when Farez reduced his product’s price from $1.99 to $0.99, purchases doubled, and so did the feedback, all while revenue remained steady [1]. This example drives home the value of user input during the early stages. Capturing this feedback is essential - tools like chatboxes and session recording software should be installed from day one to gather immediate, actionable insights. These insights, coupled with a rapid iteration process, are often the difference between a successful MVP and a failed launch.

Taking these lessons further, platforms like ClackyAI are stepping in to handle the heavy lifting. They generate full-stack applications from natural language descriptions in minutes, complete with admin panels, Stripe integrations, authentication, and AI models - all without requiring configuration [9]. Even better, ClackyAI’s self-healing AI detects and fixes bugs automatically, cutting down debugging time, which typically eats up 60% of production-ready development efforts [6].

The path is clear: ship early, iterate quickly, and let real users challenge your assumptions. As Ethan Bloom points out:

"Most founders wait too long and overengineer, but the biggest wins come once you start seeing what real users need and iterate fast" [12].

The tools to move faster than ever are here - the real question is whether you’re ready to launch before you feel fully prepared.

FAQs

How do AI tools speed up building a SaaS MVP?

AI tools can significantly accelerate the process of building a SaaS MVP by automating critical parts of development. Take AI coding agents, for instance - they can create functional prototypes based on simple prompts, cutting down the need for extensive manual coding. These tools can handle everything from validating ideas and building the product to testing and deploying it, making it easier to iterate and launch faster.

AI-powered platforms also simplify workflows by automating repetitive tasks like debugging and testing. This frees up time for founders and small teams to fine-tune their product. The added efficiency not only lowers costs but also allows startups to test their ideas quickly - an essential advantage in the early stages of development. Tools such as ClackyAI even offer comprehensive support, ensuring the final product is ready for deployment and real-world use.

What mistakes should I avoid when quickly building a SaaS MVP?

When you're building a SaaS MVP quickly, the key is to focus on what really matters. Avoid the temptation to overbuild by cramming in too many features. Stick to the core offering - this not only speeds up development but also makes it easier to gather meaningful feedback from your users. Simplicity is your best friend when it comes to iterating faster.

Another pitfall to watch out for is skipping user onboarding. If users don’t know how to navigate your product or see its value right away, they’ll likely feel frustrated and leave. Take the time to craft a straightforward, embedded onboarding process that guides users from the start and helps them see why your product is worth their time.

Lastly, don’t overlook your backend infrastructure. A rushed or fragile setup may seem fine initially, but it could cause major headaches as your product scales. Investing in a reliable and scalable backend from the beginning will save you from costly technical issues and delays down the road.

Why is user feedback more important than early revenue when launching a SaaS MVP?

User feedback plays a key role in shaping a SaaS MVP, offering a window into what users genuinely need and revealing areas where the product can improve. While early revenue might look encouraging, it doesn’t always guarantee that the product effectively solves a problem or aligns with market demands.

By prioritizing feedback, you can fine-tune your product, tackle user pain points, and establish a solid base for future growth. Early revenue is helpful, but true, lasting success comes from building a product that connects with users and delivers on their expectations.